Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

There’s a version of this conversation that happens on almost every healthcare product team at some point. Engineering wants to move fast. Legal and compliance want everything locked down. The product is stuck in the middle, trying to ship features. And somewhere in that tension, someone says the words that will haunt the release cycle: “We’ll add HIPAA compliance before launch.”

That sentence is where the trouble starts.

Because HIPAA compliance isn’t a layer you apply on top of a finished product. It’s a set of architectural decisions about how you store data, how you control access, and how you respond to incidents, and those decisions shape everything downstream. Try to retrofit them after the fact, and you’re not adding compliance; you’re rebuilding.

An honest counterpoint: compliance doesn’t have to kill velocity. The teams that do this well, healthcare app development companies in the USA like Tech Exactly, have figured out how to bake compliance into the development lifecycle without turning every sprint into an audit exercise. That’s what this blog is about.

HIPAA stands for the Health Insurance Portability and Accountability Act, enacted in 1996 and updated significantly through the HITECH Act. It sets the national standard for protecting sensitive patient health information, which the regulation calls Protected Health Information, or PHI, and it applies to any covered entity (healthcare providers, health plans, clearinghouses) and their business associates that handle this data.

For developers and product teams, HIPAA isn’t one rule. It’s a set of interlocking rules:

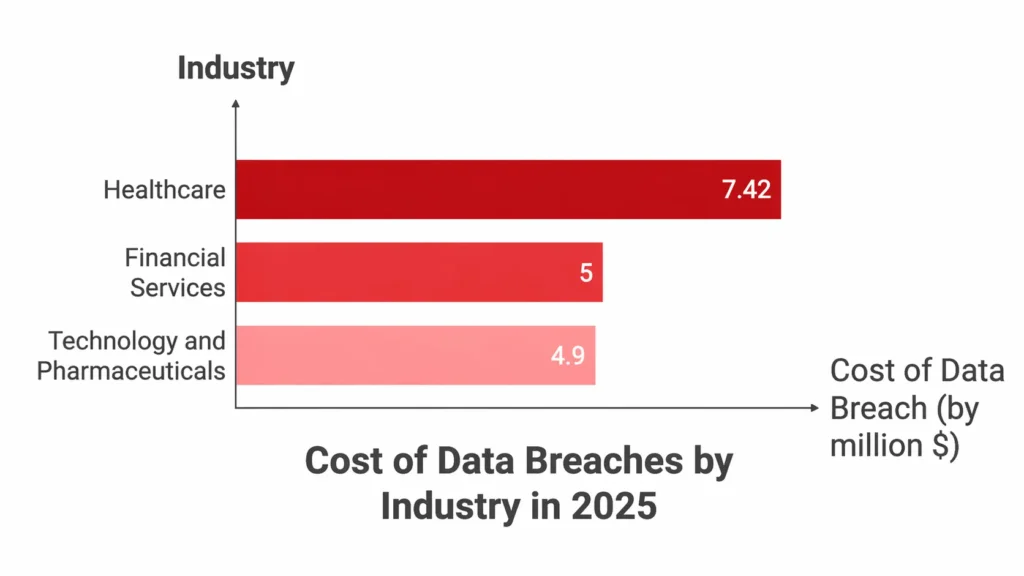

In 2024, the Office for Civil Rights (OCR) closed 22 HIPAA investigations with financial penalties, collecting $16 million in fines, and enforcement activity has stayed high into 2025. The average cost of a healthcare data breach is now $7.42 million, according to The HIPAA Journal Report. And healthcare has been the costliest industry for data breaches for 14 consecutive years.

That’s the environment your app is being built in.

Before we get into the how-to, it’s worth understanding what triggers HIPAA violations in the real world, because most of them aren’t caused by hackers. They’re caused by architecture decisions that seemed fine at the time.

Here are the most common HIPAA violation examples we see in healthcare app development:

Every single one of these is a development and architecture issue masquerading as a compliance failure. And every single one is preventable.

Here’s the thing nobody wants to say out loud: the reason teams delay compliance work isn’t laziness. It’s that compliance feels like it conflicts with speed. Stand up a database, build the feature, ship it, and deal with the paperwork afterwards.

The problem is that compliance isn’t paperwork. It’s plumbing. And just like plumbing, you cannot add it to a finished building without tearing walls open.

When teams come to us at Tech Exactly after the fact (healthcare startups in New York City, growing telehealth platforms, telemedicine software companies trying to pass their first audit), the rework cost is almost always higher than the original build would have been. We’ve seen products that needed complete data model rewrites because PHI was stored alongside operational data with no separation. We’ve seen access control systems that had to be rebuilt from scratch because they were designed around features, not roles. We’ve seen integrations with third-party analytics tools that turned every user session into an unintentional PHI disclosure.

The irony is that building compliance in from the start actually enables faster development at scale, because you’re not making architecture decisions you’ll have to undo later. Compliance-first teams ship faster in months three through twelve because they’re not carrying technical debt from month one.

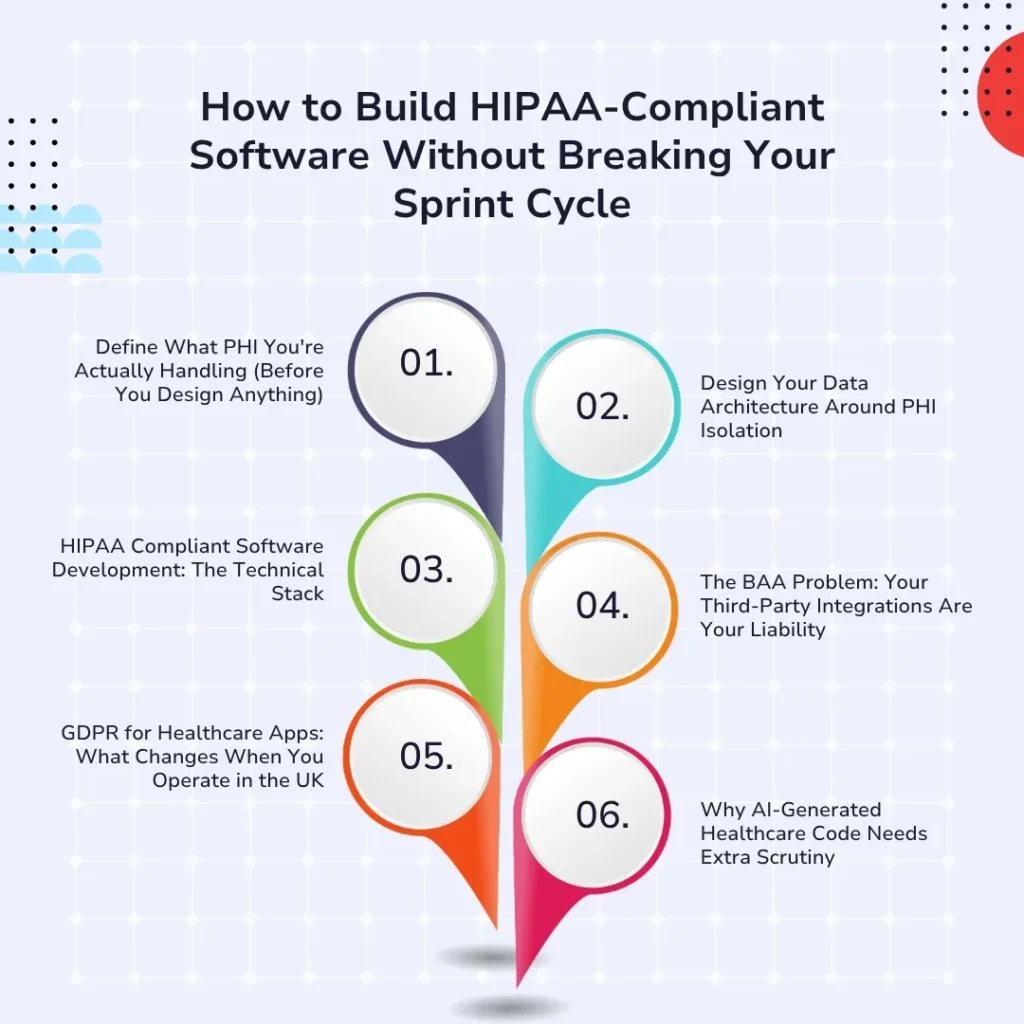

There’s a structured way to do this, one that integrates compliance checkpoints into your existing development workflow rather than sitting outside it as a separate process. Here’s how we approach it across our healthcare mobile app development services.

This sounds obvious. It rarely gets done rigorously.

PHI isn’t just medical records. Under HIPAA, it’s any individually identifiable health information, which includes names combined with diagnoses, appointment dates, insurance IDs, IP addresses linked to health interactions, and more. Your data classification model needs to explicitly define what in your product qualifies as PHI, where it lives, who touches it, and what controls apply to it.

Without this map, every subsequent decision (your database schema, your logging strategy, your analytics setup, your third-party integrations) is being made without the information it needs. And that’s how you end up with tracking pixels in patient portals.

Do this work in week one. It will shape everything else, and it takes far less time than the retrofit will.
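A classification map can start as a small, reviewable table in code. The sketch below is a minimal illustration, not a complete inventory: the field names, store names, and roles are all hypothetical, and the key design choice is that an unclassified field fails closed instead of silently defaulting to "not PHI".

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PHI = "phi"                  # individually identifiable health information
    OPERATIONAL = "operational"  # no health context, no patient identifiers


@dataclass(frozen=True)
class FieldRule:
    sensitivity: Sensitivity
    store: str            # where the field is allowed to live
    allowed_roles: tuple  # which roles may read it


# Hypothetical field inventory for an appointment-booking feature.
CLASSIFICATION = {
    "patient_name": FieldRule(Sensitivity.PHI, "phi_db", ("clinician", "billing")),
    "diagnosis_code": FieldRule(Sensitivity.PHI, "phi_db", ("clinician",)),
    "appointment_date": FieldRule(Sensitivity.PHI, "phi_db", ("clinician", "scheduler")),
    "insurance_id": FieldRule(Sensitivity.PHI, "phi_db", ("billing",)),
    "client_ip": FieldRule(Sensitivity.PHI, "phi_db", ("security",)),
    "feature_flag": FieldRule(Sensitivity.OPERATIONAL, "app_db", ("engineer",)),
}


def classify(field_name):
    """Return the rule for a field; unclassified fields fail closed."""
    try:
        return CLASSIFICATION[field_name]
    except KeyError:
        raise KeyError(f"{field_name!r} is unclassified; classify it before use") from None


def phi_fields(record):
    """Names of the fields in a record that are classified as PHI."""
    return {name for name in record if classify(name).sensitivity is Sensitivity.PHI}
```

Schema reviews, logging rules, and analytics allowlists can then all read from the same table, so a new field has to be classified before anything else can use it.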

One of the most impactful compliance-by-design decisions is data isolation: keeping PHI separated from your operational and analytics data at the infrastructure level, not just at the query level.

In practice, this means:

This is particularly critical for online telemedicine platforms and telehealth platforms that are HIPAA compliant, where consultation recordings, session metadata, and clinical notes all need to be stored under PHI-level controls even when the underlying video infrastructure is a commodity service.
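At the application boundary, isolation can be sketched as a routing step that decides which store each field may reach. The example below is an assumption-laden illustration: the allowlisted keys are hypothetical, and it deliberately treats anything not on the analytics allowlist as PHI, so a newly added field cannot leak into analytics by default. It complements infrastructure-level separation; it does not replace it.

```python
# Hypothetical allowlist: the only keys permitted to reach the analytics store.
ANALYTICS_ALLOWLIST = frozenset({"event", "screen", "app_version"})


def split_for_storage(record):
    """Split one record into (phi_payload, analytics_payload).

    Anything not explicitly allowlisted is routed to the PHI store.
    """
    analytics = {k: v for k, v in record.items() if k in ANALYTICS_ALLOWLIST}
    phi = {k: v for k, v in record.items() if k not in ANALYTICS_ALLOWLIST}
    return phi, analytics


phi_payload, analytics_payload = split_for_storage({
    "event": "visit_started",
    "screen": "consultation",
    "patient_name": "Jane Doe",
    "session_note": "follow-up in two weeks",
})
# analytics_payload contains only "event" and "screen";
# the name and the note stay in phi_payload.
```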

Compliance intentions only matter if they’re implemented in the code. Here’s what HIPAA-compliant software development actually looks like at the architecture level:

Encryption

Access Control
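A minimal sketch of role-based access with MFA and per-patient scoping follows. The roles, permission names, and the in-memory assignment table are hypothetical; a real system would back them with its identity provider and database. The point it illustrates is that a role alone should not unlock clinical records: access is also scoped to the patients a clinician is assigned to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: str
    role: str
    mfa_verified: bool


# Hypothetical role-to-permission table.
ROLE_PERMISSIONS = {
    "therapist": {"read_notes", "write_notes"},
    "billing": {"read_invoices"},
    "admin": {"manage_users"},
}

# Hypothetical assignment table: patient_id -> assigned therapist's user_id.
PATIENT_ASSIGNMENTS = {"patient-1": "therapist-a", "patient-2": "therapist-b"}

CLINICAL_ACTIONS = {"read_notes", "write_notes"}


def can_access(user, action, patient_id):
    """Allow an action only if MFA, role, and patient scope all pass."""
    if not user.mfa_verified:
        return False
    if action not in ROLE_PERMISSIONS.get(user.role, set()):
        return False
    if action in CLINICAL_ACTIONS:
        # Clinical records are scoped to the assigned clinician only.
        return PATIENT_ASSIGNMENTS.get(patient_id) == user.user_id
    return True
```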

Audit Logging
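One common way to make an audit trail tamper-evident is to chain entries by hash, so that editing or deleting an earlier entry invalidates everything after it. The sketch below is an in-memory illustration under that assumption; the class and field names are hypothetical, and a production log would write to append-only storage. Note that entries reference resource IDs, never the PHI itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


class AuditLog:
    """In-memory, hash-chained audit log (illustrative only)."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, actor, action, resource_id):
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource_id": resource_id,  # an identifier, not the record contents
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry has been altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```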

CI/CD Integration

Together, these controls are the baseline from which you build every feature. When compliance is baked in this way, adding a new feature doesn’t require a separate compliance review; it inherits compliance from the underlying architecture.
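As one illustration of a check that can run in a CI pipeline, the hypothetical lint step below flags log or print calls that mention PHI-like field names. The field list and the regular expression are assumptions for the sketch; a name-based heuristic like this will miss cases and raise false positives, so it is a tripwire, not proof of compliance.

```python
import re

# Hypothetical PHI field names; in practice, derive these from your classification map.
PHI_FIELD_NAMES = ("patient_name", "diagnosis", "ssn", "insurance_id")

# Matches print(...), logger.<method>(...), and logging.<method>(...).
LOG_CALL = re.compile(r"\b(?:print|logger\.\w+|logging\.\w+)\s*\(")


def find_phi_logging(source):
    """Return (line_number, field_name) pairs where a log call mentions a PHI field."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        if LOG_CALL.search(line):
            for name in PHI_FIELD_NAMES:
                if name in line:
                    findings.append((lineno, name))
    return findings


sample = 'x = 1\nlogger.info("booked %s", patient_name)\nsave(patient_name)\n'
print(find_phi_logging(sample))  # [(2, 'patient_name')]
```

A pipeline step could run this over changed files and fail the build when the list is non-empty.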

This is the compliance failure that catches even well-intentioned teams off guard. If any third-party service in your stack (your analytics platform, your push notification provider, your error monitoring tool, your video SDK) touches PHI, you need a signed Business Associate Agreement (BAA) with them before a single byte of patient data flows through their systems.

No BAA means any PHI disclosure through that vendor is a HIPAA violation. Yours. Not theirs.

The common culprits:

The fix is straightforward: build a vendor PHI map as part of your compliance architecture, require BAAs before integration, and review it every time you add a new service. The operational overhead is minimal. The consequence of skipping it is not.
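A vendor PHI map can be as simple as a checked-in list with a release gate on top. The sketch below assumes a hypothetical `Vendor` record and made-up vendor names; it only shows the rule from the paragraph above: a vendor that touches PHI without a signed BAA blocks the integration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    touches_phi: bool
    baa_signed: bool


def vendors_blocking_release(vendors):
    """Names of vendors that touch PHI but have no signed BAA."""
    return [v.name for v in vendors if v.touches_phi and not v.baa_signed]


# Hypothetical inventory, reviewed whenever a new service is added.
VENDOR_MAP = [
    Vendor("video-sdk", touches_phi=True, baa_signed=True),
    Vendor("error-monitoring", touches_phi=True, baa_signed=False),
    Vendor("status-page", touches_phi=False, baa_signed=False),
]

print(vendors_blocking_release(VENDOR_MAP))  # ['error-monitoring']
```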

For a full breakdown of how to approach vendor and integration compliance, we’ve written this up in detail: How to Build Regulatory Compliant Apps

US teams often assume GDPR is just a stricter version of HIPAA. It isn’t; the frameworks have genuinely different philosophical foundations, and those differences have real technical implications.

GDPR for healthcare apps in the UK introduces:

Any healthcare app development company in the UK building products that also serve US patients, and any US company expanding into NHS-adjacent services, needs separate compliance tracks for each market. Same product, different data governance layer. We’ve written a deep dive on exactly where these frameworks diverge: Healthcare App Compliance in USA & UK: HIPAA, GDPR, FDA, UKCA

This one is recent and genuinely important. As “vibe coding”, using AI tools to generate production code quickly, becomes standard practice, healthcare teams are discovering a new compliance risk: AI-generated code that works but isn’t compliant.

AI code generation tools don’t have a HIPAA awareness layer. They’ll generate database queries that expose PHI in logs. They’ll scaffold authentication systems without MFA. They’ll suggest analytics integrations that create accidental disclosures. The code compiles, the tests pass, and then you hit your first HIPAA audit.

This doesn’t mean you shouldn’t use AI tools in healthcare development; you absolutely can and should. It means every AI-generated component that touches PHI needs an explicit compliance review pass before it’s merged. Not a vibe check. A documented review against your compliance architecture. We’ve written specifically about this risk and how to manage it: Vibe Coding a Healthcare App? Here’s What Happens at Your First HIPAA Audit
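One concrete item for that review is log hygiene. The sketch below uses Python's standard `logging.Filter` to redact values that look like US Social Security numbers or email addresses before a record is emitted. The two patterns are illustrative assumptions and far from exhaustive; pattern-based redaction is a backstop, and the primary control is still not passing PHI to log calls at all.

```python
import logging
import re

# Illustrative patterns only; real PHI takes many more forms than these two.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class RedactPHIFilter(logging.Filter):
    """Rewrite each log record so matching values never reach a handler."""

    def filter(self, record):
        message = record.getMessage()  # message with args already interpolated
        message = SSN_PATTERN.sub("[REDACTED-SSN]", message)
        message = EMAIL_PATTERN.sub("[REDACTED-EMAIL]", message)
        record.msg = message
        record.args = ()  # args are merged into msg, so clear them
        return True  # keep the record, now sanitized


logger = logging.getLogger("patient_portal")  # hypothetical logger name
logger.addFilter(RedactPHIFilter())
```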

A therapy provider came to Tech Exactly needing a full patient-facing web platform: appointment booking, secure messaging with therapists, session notes, and billing integration. The challenge wasn’t complexity; it was sensitivity. Mental health records carry additional protections beyond standard PHI, and the client operated in multiple US states with different state-level privacy requirements layered on top of HIPAA.

We built the platform with PHI isolation as the foundational architecture decision: mental health notes were stored in a separately encrypted data store with strict access scoping (therapists could only access their own patients’ records, not the full system). Consent management was built directly into the onboarding flow with granular per-purpose tracking. The billing integration used a HIPAA-compliant payment processor with a signed BAA, and session metadata (timestamps, session IDs, and duration) was sanitized before flowing to any analytics layer.

The result was a platform that passed HIPAA review without a single architectural change post-build, launched in eight weeks, and gave the client a compliant foundation to scale to new states and additional therapist cohorts without triggering new compliance events.

Full case study here: HIPAA-Compliant Website for a Therapy Provider

Run through this before any PHI-touching feature goes to production:

Data Architecture

Access & Authentication

Third-Party Integrations

Audit & Incident Readiness

GDPR (for UK/EU deployments)

The teams that treat HIPAA compliance as a development constraint end up slower. They’re constantly negotiating between what they want to build and what the regulation allows, and they’re always one audit away from a rebuild.

The teams that treat compliance as architecture end up faster. They make the hard decisions once, upfront, and then every subsequent feature is built on a foundation that already knows how to handle PHI, who can access what, and what to do when something goes wrong.

That’s the shift in mindset that separates healthcare products that scale from ones that stall.

If you’re building a healthcare app, whether you’re a healthcare startup in New York City, a growing telemedicine software company, or an enterprise health system, and you want to get HIPAA compliance right without it becoming a drag on your development cycle, Tech Exactly’s healthcare mobile app development team has done this across the USA and UK markets.

Build it right. Build it once.

Q1. What does HIPAA stand for, and does it apply to my app?

HIPAA stands for the Health Insurance Portability and Accountability Act. It applies to your app if you’re a covered entity (healthcare provider, health plan, or clearinghouse) or a business associate handling PHI on a covered entity’s behalf. In that context, if your app stores, transmits, or processes individually identifiable health information, including appointment data, diagnoses, prescriptions, or insurance details, HIPAA applies.

Q2. What are the most common HIPAA violation examples in app development?

The most common issues we see are: PHI exposed through misconfigured analytics tools (tracking pixels, Google Analytics), missing Business Associate Agreements with third-party vendors, disabled MFA that allows unauthorized access, no documented risk analysis, and delayed breach notification. Most are architecture failures, not security failures.

Q3. How is GDPR for healthcare apps different from HIPAA?

HIPAA is PHI-centric and US-specific. GDPR is rights-centric; it gives individuals more control over their data, requires more granular consent, mandates a 72-hour breach notification window (vs. HIPAA’s 60 days), and includes rights like erasure and data portability that require technical support in your product. If you’re operating in both the US and UK, you need both compliance tracks running independently.
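The two notification windows mentioned above are easy to compare with a small date calculation. This sketch treats both figures as outer limits measured from the moment of discovery, which is a simplification; the function and constant names are hypothetical, and your incident runbook should take its actual deadlines from counsel.

```python
from datetime import datetime, timedelta, timezone

GDPR_WINDOW = timedelta(hours=72)  # outer limit cited above for GDPR
HIPAA_WINDOW = timedelta(days=60)  # outer limit cited above for HIPAA


def notification_deadlines(discovered_at):
    """Latest notification time under each framework for one discovery time."""
    return {
        "gdpr": discovered_at + GDPR_WINDOW,
        "hipaa": discovered_at + HIPAA_WINDOW,
    }


discovered = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
deadlines = notification_deadlines(discovered)
print(deadlines["gdpr"].isoformat())   # 2025-01-04T09:00:00+00:00
print(deadlines["hipaa"].isoformat())  # 2025-03-02T09:00:00+00:00
```

A product serving both markets has to plan its incident response around the shorter window.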

Q4. Can we use AI coding tools for HIPAA-compliant software development?

Yes, but with explicit review gates. AI tools don’t generate HIPAA-aware code by default. They’ll miss logging sanitization, skip MFA in auth scaffolds, and suggest integrations that create PHI exposure. Every AI-generated component touching PHI needs a documented compliance review before it’s merged. AI speeds up development; compliance review prevents it from creating problems downstream.

Q5. What’s the minimum we need to do to be HIPAA compliant before launch?

There’s no real “minimum”: the Security Rule requires a comprehensive set of administrative, physical, and technical safeguards, and the first thing OCR checks is whether you’ve done a risk analysis at all (76% of 2025 enforcement actions included a risk analysis failure penalty). The practical minimum is: data classification, PHI-isolated architecture, encryption at rest and in transit, RBAC with MFA, audit logging, signed BAAs with all vendors, and a documented breach notification process.

Q6. We’re a healthcare startup in New York City. Do state laws add to HIPAA requirements?

Yes. New York has its own SHIELD Act and Department of Health regulations that layer additional requirements on top of HIPAA. In 2025, the New York AG imposed a $500,000 fine on a healthcare provider for cybersecurity failures under state law, not HIPAA. Any healthcare product operating in New York needs to account for both federal and state requirements.

Q7. Does HIPAA compliance apply to telehealth platforms differently than regular healthcare apps?

The same rules apply, but the implementation points are different. For telehealth platforms that are HIPAA compliant, the specific focus areas are: video infrastructure (requires a BAA with your provider), session recording storage (PHI-level controls required), cross-state provider licensing, and digital consent management built into the encounter flow, not just in onboarding documents.